I have read many seemingly endless discussions about audio equipment. Topics include cables, lossless vs. lossy formats, compression algorithms, jitter, ultrasound, playing back files from different locations on a hard disk, power conditioners, and so on.

I suppose these discussions arise because the perceived difference in sound between, for example, one cable and another is small. The very existence of a debate means that the difference is not obvious to everybody, or perhaps that there is no difference at all.

So is this the end of high-end? Can we still get an audible improvement that is obvious to almost everyone, in audio equipment now or in the future?

What is the aim of audio hardware?

When we discuss recordings of acoustic bands and orchestras, we basically want to hear the “natural” sound during audio playback. Here “natural sound” is audio similar to what we hear in a concert hall or live music setting.

In this sense, “naturalness” is the degree to which the ambiance and sound of a concert hall experience are reconstructed in a normal listening environment, such as headphones or speakers in a room.

However, the term “natural” is not as simple as it seems. There is no “natural” sound of a musical instrument. There is only the “natural” sound of a system [musical instrument + concert hall].

What is happening now?

In my opinion, modern hi-fi/hi-end audio devices are close to ideal in terms of the concept of low distortion.

Audio devices achieve a low distortion level in music transfer through a complete system: from a microphone to speakers in a listening room.

Distortions introduced by audio equipment as a music signal is transferred from the microphone to the listening room

The ideal system should have zero distortion across the chain. A signal that is received by a microphone and then radiated by speakers should not change at all. This concerns harmonic distortion and the flatness and linearity of the various responses. Even cheap modern digital audio devices provide good enough sound. Expensive devices sound great.

There are endless discussions on whether we can hear differences between the components of an audio system. Are there quirks in the settings? Is the signal modified or not?

I said “close to ideal” above because the differences, when properly measured, may be either very small for human ears or absent altogether (that is, imaginary). Otherwise, there would be no discussions.

We can try to get obvious sound differences for all classes of audio devices, even for the best ones.

The Physical Process

The sound of a live band

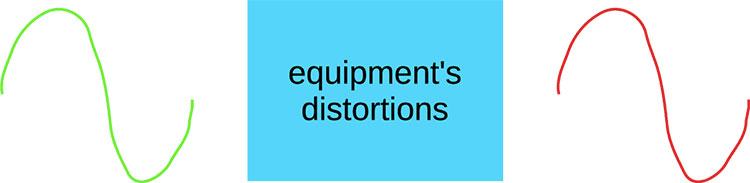

Several omnidirectional audio sources (musical instruments) are placed on the stage. Acoustic waves spread from the sources in an infinite number of directions.

Omnidirectional acoustic sources spread acoustic waves (along rays) in all directions around them

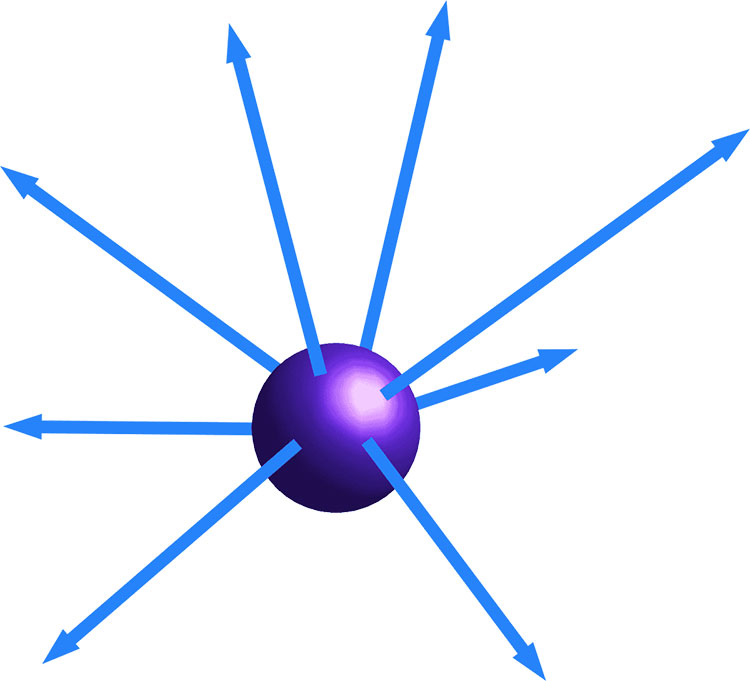

These countless acoustic rays bounce off, or are absorbed by, the surfaces of the concert hall. Bounced rays often bounce again.

Bouncing of acoustic rays, radiated by musical instruments, into a concert hall

All these direct, bounced, and re-bounced rays ‘interfere’.

What Is Interference?

Interference is the sum of oscillations at a point in space (in a concert hall or listening room).

Oscillations propagate along rays, as shown above. The rays have different lengths, so waves travel from the sound source (musical instrument) to the point with different time delays. At that point, the waves may reinforce or cancel one another.
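The effect of different delays can be sketched numerically. The example below is a minimal illustration, not a room model: it assumes equal-strength pure tones, ignores attenuation with distance and losses at reflection, and uses an approximate speed of sound. The function name and constant are hypothetical, chosen only for this sketch.

```python
import cmath
import math

# Assumption: approximate speed of sound in air at room temperature.
SPEED_OF_SOUND = 343.0  # m/s


def summed_amplitude(path_lengths_m, frequency_hz):
    """Amplitude of the sum of equal-strength sine waves at one point.

    Each wave travels a different path length, so it arrives with a
    different delay; at a given frequency that delay is a phase shift.
    Simplification: distance attenuation and reflection loss are ignored.
    """
    total = 0j
    for length in path_lengths_m:
        delay_s = length / SPEED_OF_SOUND
        phase = 2 * math.pi * frequency_hz * delay_s
        total += cmath.exp(-1j * phase)  # unit-amplitude wave as a phasor
    return abs(total)
```

At 343 Hz the wavelength is about 1 m under this assumption. Two rays of 5.0 m and 6.0 m differ by a whole wavelength and add up to twice the single-ray amplitude, while rays of 5.0 m and 5.5 m differ by half a wavelength and cancel.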

The gain and reduction themselves are not the point of this article. The main issue is the correct recording of the interference at a point in a concert hall and the identical reproduction of that interference at a target point in a listening room. The listener’s ear position is usually considered the most critical point.

A wave field is created in a concert hall or listening room. The field varies from point to point in the room, because all these rays interfere differently at each point. The point must be considered in all three dimensions: movement along any of them changes the interference.
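To illustrate how the field changes with position, here is a rough sketch with one source and a single wall reflection, modeled as a mirror-image source behind the wall. Everything specific in it is an assumption made for illustration: the point-source 1/r spreading, the reflection strength of 0.8, the coordinates, and the function name.

```python
import cmath
import math

# Assumption: approximate speed of sound in air at room temperature.
SPEED_OF_SOUND = 343.0  # m/s


def pressure_amplitude(listener, sources, frequency_hz):
    """Amplitude at a 3-D listener position from several point sources.

    `sources` is a list of ((x, y, z), strength) pairs. A reflection is
    represented by an extra "image" source with reduced strength.
    """
    wavenumber = 2 * math.pi * frequency_hz / SPEED_OF_SOUND
    total = 0j
    for position, strength in sources:
        distance = math.dist(listener, position)
        # Phase from travel distance, amplitude falling off as 1/distance.
        total += strength * cmath.exp(-1j * wavenumber * distance) / distance
    return abs(total)


# Hypothetical layout: a source 2 m in front of a wall at y = 3 m, plus its
# mirror image behind the wall with an assumed reflection strength of 0.8.
sources = [((0.0, 1.0, 1.5), 1.0), ((0.0, 5.0, 1.5), 0.8)]

seat_a = pressure_amplitude((4.0, 1.0, 1.5), sources, 1000.0)
seat_b = pressure_amplitude((4.0, 1.5, 1.5), sources, 1000.0)
```

Moving the listening point by half a metre changes the path difference between the direct and reflected rays, so `seat_a` and `seat_b` come out noticeably different even though the source is unchanged. A real hall has many more reflections, which makes the dependence on position far more intricate.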

The sound of a recorded band

The sound of a live band is captured by microphones.

There are 2 main approaches:

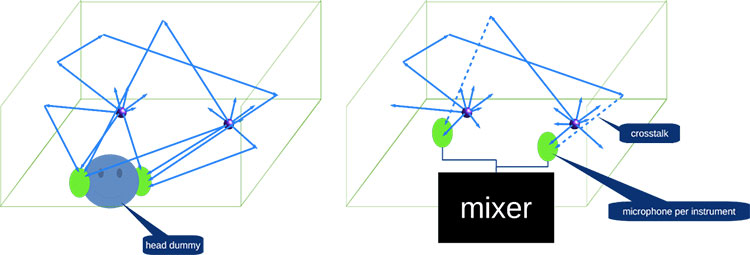

- One microphone per instrument, group of instruments, or drum unit. The wave field is formed artificially, primarily in mixing and partially in post-production.

- Microphones in a human head model. The sound is captured much as human ears would capture it and is distributed to listeners of the record without any processing.

Music recording methods: head dummy and microphone per instrument

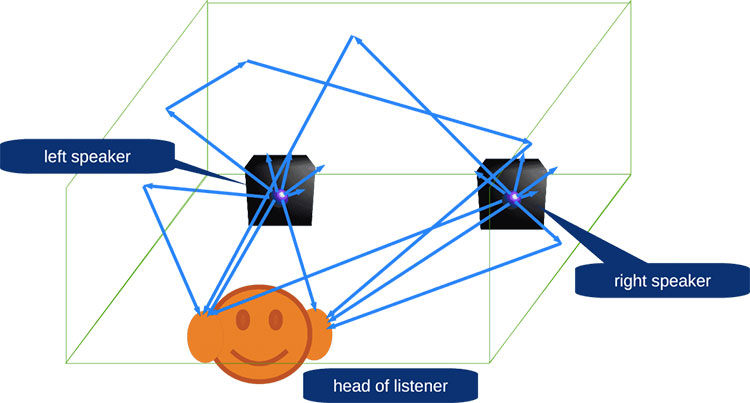

The recordings are radiated from speakers with some distortion introduced by the apparatus. Like instruments, speakers radiate acoustic waves in many directions. Their direct acoustic rays and the bounced and re-bounced ones also interfere.

Therefore, recordings played through speakers create a sound field different from that of the original live band in a concert hall. Even correctly captured recordings suffer from this, and they would do so even if the apparatus distortions were eliminated completely.

Therefore, in my opinion, at the current level of audio equipment we should discuss the quality of acoustic wave field reproduction rather than traditional sound-quality measures such as distortion (harmonic, amplitude, and others).

A sound (acoustic) field is a sound hologram. Like an optical hologram (a 3-D visual image), a sound field is an acoustic wave field that our ears can perceive.

Implementation issues

A sound hologram is really the aim of a 3-D sound system. As a rule, these systems are multi-channel.

There are several issues:

- Proper sound capturing;

- Impact of reflections and re-reflections on the accuracy of speaker playback;

- Converting the hologram at a listening point in a concert hall into the hologram at the location of the listener’s ears in a listening room.

Capturing

If we want to capture sound at one listening position in a concert hall, we can use a head dummy with microphones in its “ears”. This is not as trivial as it seems at first sight: the human head is a complex system for receiving acoustic waves, not simply two isolated microphones over the ears.

Let’s imagine that we have modeled a ‘head’ ideally as a sound wave receiver. Even so, there is no single ideal model, because different people have different “acoustic wave receivers” (hereafter, “head receivers”). If we can model the head receivers of famous composers, musicians, and trained listeners, we can release different editions of one concert (album)!

As a first approximation, I see a “head receiver” as a two-microphone system with two outputs. Using microphones with a pattern similar to that of the human ear is desirable. However, it may not be enough for a complete simulation of the “live” listener’s ear system.

A microphone pattern describes the sensitivity of a microphone to sound arriving from each direction in 3-D space.
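As an illustration of what a pattern is, the sketch below uses the textbook first-order family of microphone patterns, where sensitivity is a + (1 - a)·cos θ and θ is the angle between the microphone axis and the direction the sound arrives from. This is a simplified, frequency-independent model, not the pattern of a human ear, which is far more complex. The function name and argument conventions are assumptions made for this example.

```python
import math


def first_order_sensitivity(mic_axis, arrival_direction, a):
    """Relative sensitivity of an idealized first-order microphone.

    mic_axis          -- 3-D vector along which the microphone points
    arrival_direction -- 3-D vector from the microphone toward the source
    a                 -- pattern parameter: 1.0 omnidirectional,
                         0.5 cardioid, 0.0 figure-eight
    """
    dot = sum(m * d for m, d in zip(mic_axis, arrival_direction))
    norm = (math.sqrt(sum(m * m for m in mic_axis))
            * math.sqrt(sum(d * d for d in arrival_direction)))
    cos_theta = dot / norm
    return a + (1 - a) * cos_theta
```

With `a = 0.5` (cardioid) and the microphone pointing along `(1, 0, 0)`, sound from the front gets full sensitivity, sound from the side `(0, 1, 0)` gets half, and sound from directly behind is rejected. With `a = 1.0` the result is the same in every direction.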

Speaker playback issues

A speaker is an audio source with a complex pattern. That is, the speaker radiates sound rays with different intensities in different directions. All these rays bounce and re-bounce off many different surfaces in a listening room.

Direct acoustic rays from the left and right speakers mix with bounced and re-bounced rays

This mixing of direct and bounced rays distorts the reproduced sound hologram even if it was recorded correctly.

Some modern audio systems use virtual sound sources, “drawn” in the space of a listening room via a speaker array (a multi-channel system). The array is not a fixed structure; systems can use different kinds of speaker arrays.

However, this is the synthesis of an audio object, not a sound hologram captured in a concert hall. Synthesis is nevertheless a good solution for electronic music and cinema, that is, for cases where the sound field may be constructed artificially.

I suppose this is the reason why some listeners prefer “simpler” stereo systems.

Headphones playback issue

In headphones, a driver works in the immediate area of the ear, unlike a speaker system.

However, it is not an ideal system either. Despite the short distance between the ear and the driver, a headphone is a complex acoustic system that also distorts a sound hologram.

Not only the driver but also the cup acts as a radiation source. Oscillations from the driver are transmitted mechanically to all elements of the cup, and these elements oscillate and radiate acoustic waves themselves.

The ear is a complex system that receives sound from all directions. I think it is more correct here to discuss the whole head as a sound receiver. However, headphones can’t reproduce the complete acoustic environment of a head.

Besides, in a concert hall acoustic waves and vibrations act on the human body, although how much depends on the music genre (classical, rock, dance, etc.). This impact on the body is also beyond a headphone’s ability.

I have seen pictures where speakers are divided by acoustic shields to reduce crosstalk between the left speaker and the right ear and vice versa. However, this is not the best way, because the bouncing issue isn’t resolved. The sound hologram is distorted there too.

Summary

I suppose that there is still potential to improve sound quality in future audio devices. It can be realized by capturing a concert-hall sound field (sound hologram, 3-D sound) and reproducing it in a listening room.

Currently, the closest we can get to a sound hologram is to capture the sound field at a listening point in a concert hall with a two-microphone head dummy and play it back via headphones. However, this will not mimic the impact of sound on the human body, including the complete human head.

Speaker systems suffer from the acoustic-ray bouncing issue, which significantly distorts playback of the recorded sound field, multi-channel playback included.

Future sound-field playback devices should be as easy to use as stereo systems.

Acknowledgments

This article was first published in June 2016 on the Sample Rate Converters’ main website.

Permission has been provided by Yuri for us to reprint with some additional notes and amendments for Headfonics readers. However, copyright remains the property of Yuri and permission must be gained for any further republication.